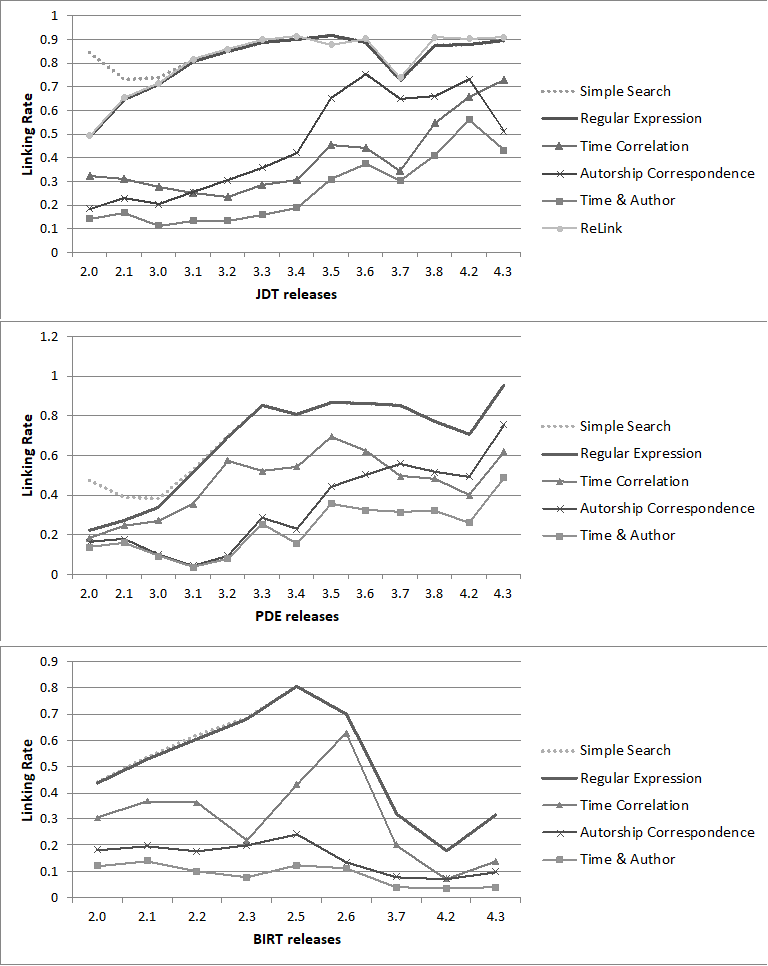

Software defect prediction research relies on data that must be collected from otherwise separate repositories. To make results more generalizable, standardized protocols for data collection and validation are necessary. This paper presents an exhaustive survey of the techniques and approaches used in the data collection process. It identifies issues that must be addressed to minimize dataset bias, and it provides a number of measures that can help researchers compare their data collection approaches and evaluate the quality of their data. Moreover, we present a data collection procedure that uses a bug-code linking technique based on regular expressions. A detailed comparison with a number of popular data collection approaches and their publicly available datasets, together with a root cause analysis of the inconsistencies found, reveals that our procedure achieves the most favorable results. Finally, we implement our data collection procedure in a data collection tool we name the Bug-Code (BuCo) Analyzer.
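The abstract does not state which regular expression the procedure uses, so the following is only a minimal sketch of the general idea of regex-based bug-code linking, not the BuCo Analyzer's implementation. It assumes, hypothetically, that commit messages reference bugs as a keyword ("bug", "issue", "fix", "fixes", "fixed") followed by an optional `#` and a numeric ID. The names `BUG_REF` and `link_commits_to_bugs` are illustrative only. Candidate IDs are kept only if they exist in the issue tracker, a common way to reduce false links.

```python
import re
from collections import defaultdict

# Hypothetical pattern (an assumption, not the paper's pattern): a keyword such
# as "bug", "issue", or "fix"/"fixes"/"fixed", an optional "#", then a numeric ID.
BUG_REF = re.compile(r"\b(?:bug|issue|fix(?:es|ed)?)\s*#?\s*(\d+)\b", re.IGNORECASE)


def link_commits_to_bugs(commits, known_bug_ids):
    """Map each bug ID to the hashes of commits whose messages reference it.

    commits: iterable of (commit_hash, message) pairs from the version control system.
    known_bug_ids: set of bug IDs (as strings) that exist in the issue tracker;
    regex matches outside this set are discarded to reduce false links.
    """
    links = defaultdict(list)
    for commit_hash, message in commits:
        # dict.fromkeys removes duplicate IDs within one message, keeping order.
        for bug_id in dict.fromkeys(BUG_REF.findall(message)):
            if bug_id in known_bug_ids:
                links[bug_id].append(commit_hash)
    return dict(links)


commits = [
    ("a1b2c3", "Fixes bug #1234 in parser"),
    ("d4e5f6", "Refactor logging"),
    ("0719aa", "Issue 99: handle null input"),
    ("beef01", "fix #1234 follow-up"),
]
print(link_commits_to_bugs(commits, {"1234", "99"}))
# {'1234': ['a1b2c3', 'beef01'], '99': ['0719aa']}
```

Filtering against the tracker's known IDs is a design choice in this sketch: a permissive pattern alone would also link numbers that merely look like bug references.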